6 min read

Vibe Coding vs. Agentic Engineering in 2026: Which One Survives Production?

Eighteen months ago, letting an AI write your code felt like a party trick. You'd paste a prompt into a chat window, watch a function materialize, and share the screenshot on Twitter. In February 2025, Andrej Karpathy gave the practice a name, vibe coding, and suddenly half the industry had permission to stop reading the output. Today, AI-assisted development is the default workflow for most engineering teams, and the question has shifted from "should we use it?" to "how do we keep it from collapsing under its own weight?"

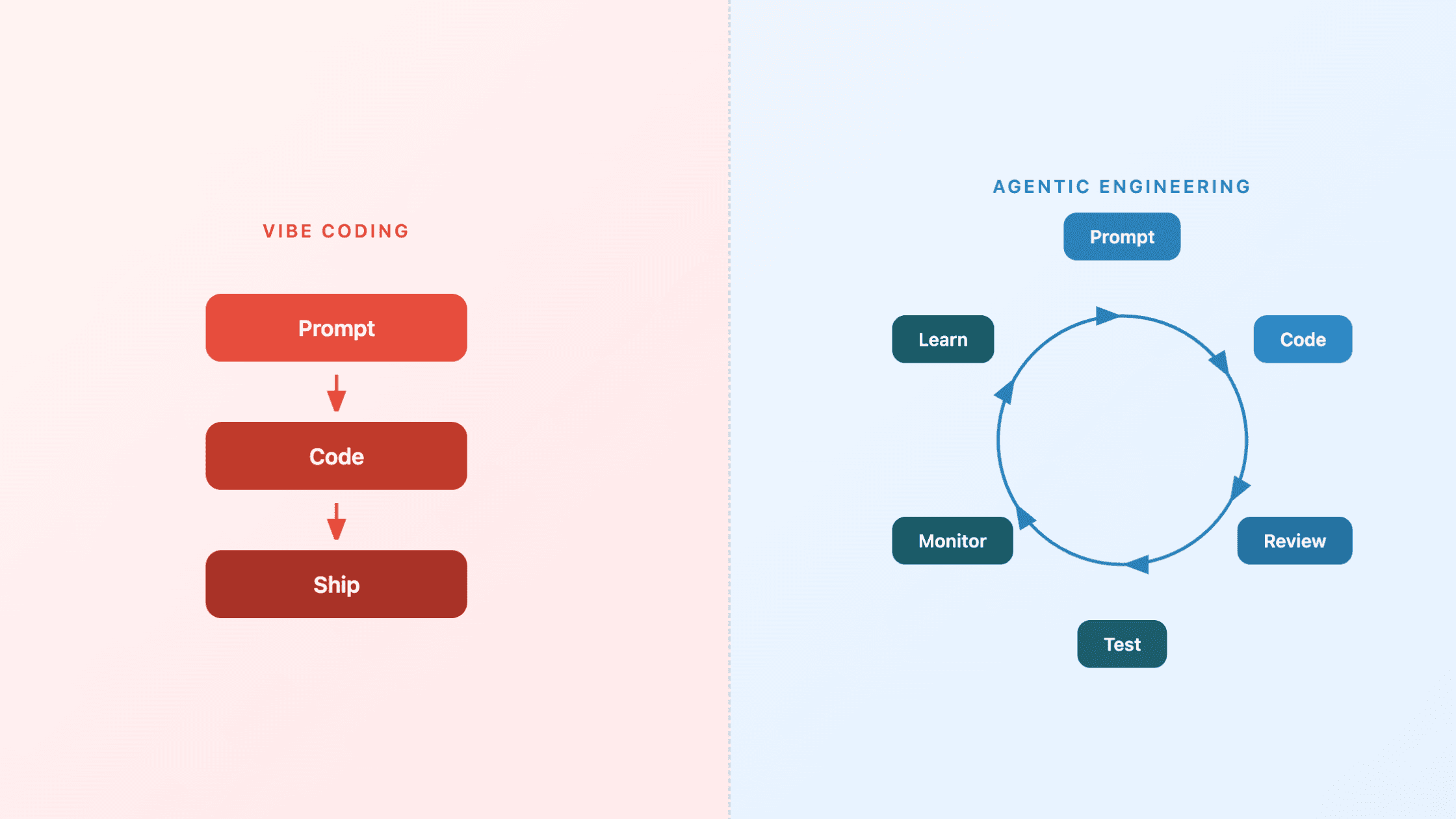

That question splits the industry into two camps. One camp vibes. The other engineers. The difference between them is the difference between a demo that earns applause and a system that survives its first quarter in production.

What vibe coding actually is

Karpathy's original description was disarmingly honest: you have a natural language conversation with an AI, accept the code it produces, run it, and if something breaks, you paste the error back in and let the AI fix it. No manual code review. No architecture planning. You "fully give in to the vibes" and let the model carry you.

The approach works brilliantly within a narrow band. Prototypes, personal tools, hackathon entries, weekend projects, and learning exercises all benefit from the speed of vibe coding. When the stakes are low and the audience is you, skipping the review cycle is a rational trade-off. You trade rigor for velocity and walk away with something functional in an afternoon.

The problem starts when that afternoon project gets promoted. Someone puts it in front of a customer. Someone else asks for a feature. A third person needs to debug it six weeks later without any architectural context. At that point, the vibes stop working.

What agentic engineering looks like

Agentic engineering is what happens when professionals use AI as a force multiplier while retaining full responsibility for architecture, quality, and judgment. The AI generates code, drafts tests, suggests refactors, and accelerates every stage of the development lifecycle. But a human stays in the loop for design decisions, security review, and system-level reasoning.

This is not a slower version of vibe coding. It is a fundamentally different operating model. Vibe coding optimizes for immediate output. Agentic engineering optimizes for correctness, maintainability, and long-term system health. The AI does more of the mechanical work, but the engineer still owns the outcome.

The distinction matters because 78% of knowledge workers now use AI agents weekly, according to Microsoft's 2026 Work Trend Index. AI-assisted work is no longer an experiment. It is the production environment. And production environments need engineering discipline, not vibes.

Where vibe coding breaks

The failure mode is predictable and well-documented. Simon Willison, one of the most respected voices in the developer community, recently admitted that he had stopped reviewing AI-generated production code and recognized the pattern as "normalization of deviance" — the gradual acceptance of lower standards until something fails. Willison's honesty is useful because it shows that even experienced, disciplined developers drift when the output looks plausible enough.

The data backs up the concern. An Oxford study on fine-tuning AI systems for user-friendly behavior found that optimizing for warmth made the model 60% more likely to give wrong answers, increasing error rates by 7.43 percentage points. Models that feel right are not necessarily models that are right. In vibe coding, the developer's primary quality signal is "does this look reasonable?", which is exactly the kind of surface-level check that friendly, confident AI output tends to pass.

Even the best models have hard ceilings. GPT-5.5 scored 75% on OSWorld desktop automation tasks, matching the human baseline. That sounds impressive until you consider the inverse: a 25% failure rate on routine tasks. For a prototype, one-in-four failures is a curiosity. For a production system processing thousands of transactions, it is a liability.
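
To make the one-in-four figure concrete, here is a rough back-of-the-envelope sketch. It assumes each task succeeds independently at the 75% benchmark rate and that the benchmark rate carries over to production, neither of which is guaranteed. The function names are illustrative only.

```python
def expected_failures(tasks: int, success_rate: float) -> float:
    """Expected number of failed tasks, assuming independent outcomes."""
    return tasks * (1.0 - success_rate)


def workflow_success_probability(steps: int, success_rate: float) -> float:
    """Chance that every step of a multi-step workflow succeeds.

    Assumes steps are independent and share the same success rate.
    """
    return success_rate ** steps


# 10,000 tasks at a 75% success rate
print(expected_failures(10_000, 0.75))  # 2500.0

# A workflow that chains five such tasks end to end
print(round(workflow_success_probability(5, 0.75), 3))  # 0.237
```

Under these assumptions, a five-step chain completes cleanly less than a quarter of the time, which is why per-task accuracy understates the risk for multi-step production workflows.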

The production gap nobody planned for

The entire software development lifecycle was designed around a core assumption: writing code is slow and expensive. Code review, testing, deployment pipelines, documentation requirements — all of these exist because producing code used to be the bottleneck that gave teams time to think.

That bottleneck is gone. AI generates code faster than any team can review it, and the infrastructure built around slow production has not caught up. The new bottlenecks sit upstream and downstream. Upstream, the quality of specifications and design decisions determines whether the AI produces the right thing. Downstream, evaluation, testing, and monitoring determine whether the right thing stays right over time.

This creates a subtle but dangerous gap. The traditional signals of code quality — clean commit history, passing test suites, up-to-date documentation — no longer reliably indicate that a human understood what was built. An AI can produce all of those artifacts without any human ever reasoning about the system's behavior under edge cases. The risk of silent failure compounds quickly when no one is watching for it.

What enterprises actually need

For organizations deploying AI agents into real workflows, the answer is not "don't use AI for code" or "review everything manually." Both extremes fail at scale. The answer is agentic engineering backed by three operational requirements.

Continuous accuracy monitoring. AI agents operating in production need ongoing measurement against ground truth, not a one-time evaluation at deployment. Models drift. Data distributions change. A system that performed well in March may silently degrade by May. Self-learning systems that detect and adapt to these shifts outperform static deployments by a wide margin.
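
As a minimal sketch of what ongoing measurement can look like, the class below keeps a rolling window of outcomes compared against ground truth and flags degradation. The class name, window size, and threshold are hypothetical choices for illustration, not a reference to any particular platform.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Tracks agent accuracy over the most recent labeled outcomes."""

    def __init__(self, window: int = 200, alert_below: float = 0.90) -> None:
        # Old outcomes fall off automatically once the window is full.
        self.outcomes = deque(maxlen=window)
        self.alert_below = alert_below

    def record(self, prediction: str, ground_truth: str) -> None:
        """Store whether the agent's output matched the known-correct answer."""
        self.outcomes.append(prediction == ground_truth)

    def accuracy(self) -> Optional[float]:
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self) -> bool:
        """True when windowed accuracy has dropped below the alert threshold."""
        acc = self.accuracy()
        return acc is not None and acc < self.alert_below


monitor = RollingAccuracyMonitor(window=4, alert_below=0.75)
samples = [
    ("approve", "approve"),
    ("deny", "approve"),
    ("deny", "deny"),
    ("approve", "deny"),
]
for predicted, actual in samples:
    monitor.record(predicted, actual)

print(monitor.accuracy(), monitor.degraded())  # 0.5 True
```

The windowed design is the point: a lifetime average would hide a system that was accurate in March and is degrading in May.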

Automated feedback loops. When an AI agent makes a mistake, that error needs to flow back into the system's learning cycle without requiring a human to manually retrain or patch. This is the difference between AI agents that improve over time and AI agents that repeat the same mistakes at scale. The feedback loop is what turns a tool into a team member.

Explainable decision trails. Every action an AI agent takes in a production environment should be traceable. Not for compliance theater, but because debugging a system you cannot inspect is expensive and slow. When a human-AI team can see why an agent made a decision, they can correct course in minutes instead of days.
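
One lightweight way to approach a decision trail is an append-only log with one structured record per agent action. The sketch below assumes a JSON Lines file and a hypothetical `DecisionRecord` shape. Real deployments would likely add request IDs, model versions, and access controls.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One traceable agent action (field names are illustrative)."""
    agent: str
    action: str
    inputs: dict
    rationale: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_decision(path: str, record: DecisionRecord) -> None:
    """Append one decision as a JSON line so the trail stays searchable."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(asdict(record)) + "\n")


def decisions_for(path: str, action: str) -> list:
    """Return every logged decision that took the given action."""
    with open(path, encoding="utf-8") as log:
        records = [json.loads(line) for line in log if line.strip()]
    return [r for r in records if r["action"] == action]
```

With records like these, answering "why did the agent escalate this order?" becomes a call such as `decisions_for("trail.jsonl", "escalate")` rather than a reconstruction from memory.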

What to do now

If your team is vibe coding prototypes and internal tools, keep going. The speed gains are real, and the risk profile is appropriate for throwaway work.

If your team is shipping AI-generated code to production or deploying AI agents into business-critical workflows, apply the agentic engineering model. That means three immediate changes:

First, reinstate human review for production paths. Not every line needs manual inspection, but every system-level decision, security boundary, and data flow should have a human who can explain why it works.

Second, build evaluation into the deployment pipeline, not after it. Accuracy metrics, regression tests, and behavioral checks should gate deployment the same way unit tests do today. If you cannot measure whether your agent is performing correctly, you cannot claim it is performing correctly.

Third, treat agent monitoring as a first-class operational concern. The same attention your team gives to uptime and latency needs to extend to agent accuracy and decision quality. A platform with built-in governance and continuous learning removes most of the manual overhead here.
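
The second change, gating deployment on evaluation, can be sketched as a small check that a pipeline runs before release. Everything here is an assumption for illustration: the `EvalCase` shape, exact-match scoring, and the 0.95 threshold would all depend on the agent and the task.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    """A prompt paired with the answer the agent is expected to give."""
    prompt: str
    expected: str


def evaluate(agent: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases where the agent's output exactly matches."""
    if not cases:
        raise ValueError("evaluation set is empty")
    passed = sum(1 for case in cases if agent(case.prompt) == case.expected)
    return passed / len(cases)


def deployment_gate(
    agent: Callable[[str], str],
    cases: List[EvalCase],
    min_accuracy: float = 0.95,
) -> bool:
    """Return True only if the agent clears the accuracy threshold."""
    return evaluate(agent, cases) >= min_accuracy


def stub_agent(prompt: str) -> str:
    # Stand-in for a real agent call; simply upper-cases the prompt.
    return prompt.upper()


cases = [EvalCase("ok", "OK"), EvalCase("fail", "nope")]
print(evaluate(stub_agent, cases))         # 0.5
print(deployment_gate(stub_agent, cases))  # False
```

In a pipeline, a `False` result would typically translate into a non-zero exit code so the release step never runs, the same way a failing unit test blocks a merge.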

Vibe coding gave the industry a visceral demonstration of what AI can produce. Agentic engineering is how that production capacity becomes trustworthy. The organizations that figure out the second part while everyone else is still impressed by the first will own the next five years of AI deployment.

The vibes were fun. Now build something that lasts.