
70% of AI Agent Success Is Organizational, Not Technical. Here's What Most Enterprises Get Wrong.

Enterprises are on track to spend $2.52 trillion on AI in 2026, a 44% increase over the previous year. The models are better than ever. The tools are more accessible. The infrastructure is mature.

And yet, Forrester predicts that enterprises will defer 25% of planned AI spend into 2027 because they can't demonstrate ROI. Only 1 in 10 AI agent pilots makes it to production. 73% of CIOs regret a major AI vendor decision made in the last 18 months.

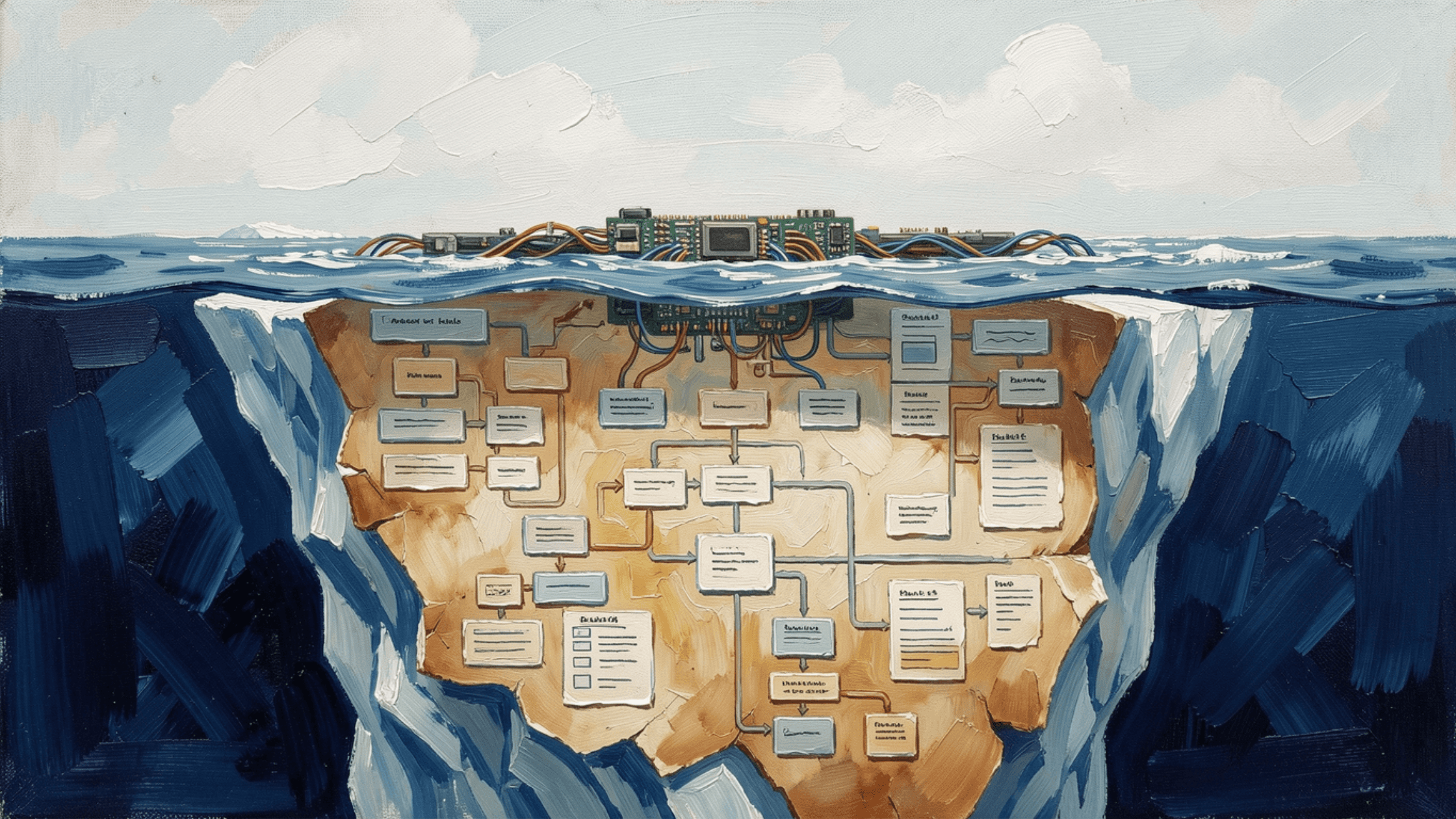

The technology isn't the problem. BCG's latest research puts it in precise terms: 10% of AI success comes from models, 20% from data and technical infrastructure, and 70% from organizational design, workflows, and cultural adoption.

Seventy percent. That means most of what decides whether an AI project succeeds or fails has little to do with the AI itself. It has to do with the organization trying to absorb it.

The 10/20/70 Framework: Where AI Actually Succeeds and Fails

BCG's "Reinvention of the CHRO" report, published in February 2026, lays out a framework that every enterprise leader needs to internalize.

10% is the model. Which large language model you use, how it's fine-tuned, what capabilities it has. This is where most enterprise attention goes. It's the least impactful factor.

20% is the data and technical infrastructure. Data quality, system integration, API architecture, compute resources. Important, but solvable with the right engineering investment.

70% is organizational. Role redesign. Workflow restructuring. Change management. Cultural adoption. Training and upskilling. Performance metrics. Career paths. This is where AI projects live or die, and it's where most enterprises invest the least.

The implication is uncomfortable but clear: if your AI strategy is primarily a technology procurement exercise, you're optimizing the 10% while ignoring the 70%. And the data shows what happens next: stalled pilots, underwhelming ROI, and the eventual budget cut.

What the 70% Actually Looks Like

Organizational readiness for AI agents isn't abstract. It's a set of concrete decisions about how work gets structured, how roles evolve, and how success gets measured.

Roles Change from Execution to Direction

When AI agents handle execution, human roles shift from doing the work to directing and reviewing it. A recruiter stops screening resumes and starts evaluating agent-curated shortlists. A financial analyst stops building spreadsheets and starts interpreting agent-generated analyses. A customer support rep stops answering routine questions and starts resolving complex escalations.

This isn't a minor adjustment. It requires rewriting job descriptions, rebuilding training programs, and redefining what "good performance" looks like in every affected role. The companies that treat this as a change management project succeed. The companies that drop AI agents into existing role structures without adjusting expectations create confusion, resistance, and attrition.

Workflows Need Redesign, Not Automation

The most common mistake is using AI agents to automate existing workflows without redesigning them. Automating a bad process just produces bad results faster.

The companies seeing real results are redesigning their workflows around what agents can do. Instead of "agent does step 3 of the existing 10-step process," it's "agent handles steps 1-7 autonomously, human handles steps 8-10." That's a fundamentally different workflow, and it requires rethinking handoff points, quality gates, and escalation criteria from scratch.
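To make the contrast concrete, here is a minimal sketch of the two designs. The names (`Step`, `build_workflow`, `handoff_points`, `should_escalate`) and the 0.8 confidence threshold are hypothetical, chosen only for illustration; a real workflow would carry far more detail than a step number and an owner.

```python
from dataclasses import dataclass
from enum import Enum


class Owner(Enum):
    AGENT = "agent"
    HUMAN = "human"


@dataclass
class Step:
    number: int
    owner: Owner


def build_workflow(total_steps: int, agent_steps: set[int]) -> list[Step]:
    """Assign the listed step numbers to the agent and every other step to a human."""
    return [
        Step(n, Owner.AGENT if n in agent_steps else Owner.HUMAN)
        for n in range(1, total_steps + 1)
    ]


def handoff_points(workflow: list[Step]) -> list[tuple[int, int]]:
    """Return (from_step, to_step) pairs where ownership changes hands."""
    return [
        (a.number, b.number)
        for a, b in zip(workflow, workflow[1:])
        if a.owner != b.owner
    ]


def should_escalate(confidence: float, threshold: float = 0.8) -> bool:
    """Hypothetical quality gate: hand off early when agent confidence is below the threshold."""
    return confidence < threshold


# Bolt-on automation: the agent does only step 3 of the existing 10-step process.
bolt_on = build_workflow(10, {3})
# Redesigned workflow: the agent owns steps 1-7, the human owns steps 8-10.
redesigned = build_workflow(10, set(range(1, 8)))

print(handoff_points(bolt_on))     # [(2, 3), (3, 4)]
print(handoff_points(redesigned))  # [(7, 8)]
```

Each pair in the output is a place where a quality gate and an escalation rule have to be defined. Writing them down this way forces the redesign conversation to happen before the agent goes live.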

Metrics Must Evolve

If you measure an AI-augmented team using pre-AI metrics, you'll get misleading results. A support team that handles 50% fewer tickets isn't underperforming if the agent is resolving the other 50% autonomously and the remaining tickets are three times more complex.
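A quick back-of-the-envelope check shows why raw ticket counts mislead. This sketch assumes a baseline of 1,000 tickets (an invented number), reads "three times more complex" as a 3x multiplier, and assumes effort scales linearly with complexity. The function name `weighted_workload` is hypothetical.

```python
def weighted_workload(tickets: int, complexity: float) -> float:
    """Complexity-weighted workload: ticket count times average complexity."""
    return tickets * complexity


# Before agents: the team handles all 1,000 tickets at baseline complexity.
before = weighted_workload(1000, 1.0)
# After agents: the team handles half as many tickets, each three times as complex.
after = weighted_workload(500, 3.0)

print(after / before)  # 1.5
```

Under those assumptions, ticket volume fell by half while the team's complexity-weighted workload rose by half. A dashboard that only counts tickets would report the opposite story.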

The enterprises succeeding with AI agents are creating new metrics: agent resolution rate, human override frequency, time-to-escalation, agent accuracy by task type. These metrics reflect the reality of human-AI collaboration instead of pretending the old operating model still applies.
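As a rough illustration, the four metrics named above could be computed from a ticket log along these lines. The `Ticket` fields, the `summarize` function, and the exact metric definitions are assumptions made for this sketch, not a standard. Each organization will need to decide, for example, whether override frequency is measured against all tickets or only those the agent attempted.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Ticket:
    task_type: str
    resolved_by: str        # "agent" or "human"
    agent_correct: bool     # was the agent's output judged correct on review?
    overridden: bool        # did a human replace the agent's output?
    seconds_to_escalation: Optional[float] = None  # None if never escalated


def summarize(tickets: list[Ticket]) -> dict:
    """Compute illustrative human-AI collaboration metrics over a ticket log."""
    if not tickets:
        raise ValueError("need at least one ticket")
    total = len(tickets)
    escalation_times = [
        t.seconds_to_escalation
        for t in tickets
        if t.seconds_to_escalation is not None
    ]
    correct_by_type = defaultdict(list)
    for t in tickets:
        correct_by_type[t.task_type].append(t.agent_correct)
    return {
        "agent_resolution_rate": sum(t.resolved_by == "agent" for t in tickets) / total,
        "human_override_frequency": sum(t.overridden for t in tickets) / total,
        "mean_time_to_escalation_s": mean(escalation_times) if escalation_times else None,
        "agent_accuracy_by_task_type": {
            task: sum(results) / len(results)
            for task, results in correct_by_type.items()
        },
    }


# Invented sample data, for illustration only.
tickets = [
    Ticket("password_reset", "agent", agent_correct=True, overridden=False),
    Ticket("password_reset", "agent", agent_correct=True, overridden=False),
    Ticket("billing_dispute", "human", agent_correct=False, overridden=True,
           seconds_to_escalation=120.0),
    Ticket("billing_dispute", "human", agent_correct=True, overridden=False,
           seconds_to_escalation=60.0),
]

print(summarize(tickets))
```

Splitting accuracy by task type is the part that tends to matter most in practice: an agent that looks fine on average may be excellent at routine requests and unreliable on anything involving judgment.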

This Week's Evidence: The Operating Model Shift Is Happening

The theoretical framework from BCG is being validated by real organizational moves happening right now.

Accenture: Promotions Now Require AI Adoption

Accenture announced this week that promotions to senior leadership roles will require "regular adoption" of AI tools. Weekly logins to two in-house AI platforms, including AI Refinery (built with Nvidia) and SynOps, are being tracked. The company has already reskilled 550,000 workers on generative AI.

This is an operating model change, not a technology deployment. Accenture isn't just providing AI tools. They're restructuring incentives so that career advancement depends on AI integration. That's the 70% in action: changing how people behave, how they're evaluated, and what skills matter for advancement.

OpenAI's Frontier Alliance: The Deployment Gap Made Explicit

When OpenAI partnered with McKinsey, BCG, Accenture, and Capgemini on February 23 to push its Frontier agent platform into enterprises, the structure of the deal was revealing.

McKinsey and BCG handle strategy, operating model design, and change management. Accenture and Capgemini handle technical implementation and lifecycle support. The fact that the world's leading AI lab assigned half the alliance to organizational change, not technology, is a direct acknowledgment that the 70% problem is real.

OpenAI could have built better deployment tools. Instead, it partnered with some of the world's largest consulting firms. That tells you where the actual bottleneck sits.

Deloitte's "Great Rebuild": The Structural Shift

Deloitte's Tech Trends 2026 report describes what they call "The Great Rebuild," where AI is restructuring technology organizations to be leaner, faster, and infused with AI at every layer. The tech function is evolving from service delivery to strategic leadership. Modular, API-first, self-service platforms are replacing monolithic systems.

Their conclusion: "Every organization studied is discovering the same truth. What got them here won't get them there."

That's the 70% in a single sentence. The org structures, processes, and operating models that built the current enterprise are not the ones that will operate the AI-augmented enterprise. Rebuilding them is the primary job.

Where CIOs Are Feeling the Pressure

The organizational challenge is creating measurable pressure on enterprise leadership.

A Dataiku/Harris Poll survey of 600 CIOs published in February found that 85% say their compensation is now directly tied to measurable AI outcomes. When personal incentives align with AI delivery, the organizational readiness gap becomes personal.

The same survey found that 73% regret a major AI vendor or platform decision made in the last 18 months. The regret isn't about the technology they chose. It's about underestimating the organizational investment required to make that technology productive.

And 71% believe their AI budget will face cuts or a freeze if ROI targets aren't met by mid-2026. That creates a ticking clock: demonstrate organizational readiness and production results within months, or lose the funding to continue.

Five Actions That Address the 70%

The enterprises successfully navigating this challenge share a pattern. They're investing as deliberately in organizational change as they are in technology.

1. Appoint an AI Operating Model Owner

Someone in the organization needs to own the operating model change, not the technology selection. This isn't the CTO's job (they own the 20%). It's a cross-functional role that coordinates between HR, operations, and technology to redesign how work gets done.

BCG's research frames the CHRO as the emerging owner of this agenda, describing them as "architects of a hybrid workforce" who integrate people with AI agents. Whether it's the CHRO or another executive, the role needs explicit authority over workflow redesign, role evolution, and adoption metrics.

2. Redesign Roles Before Deploying Agents

Don't deploy AI agents and then figure out how roles change. Define the target operating model first. What does the human do? What does the agent do? Where are the handoff points? What new skills does the human need? Answer these questions before the agent goes live.

3. Create New Performance Metrics

Build metrics that reflect the AI-augmented operating model. Agent resolution rate. Human override frequency. Escalation quality. Time-to-production for new agent capabilities. These metrics give leadership visibility into whether the organizational change is working, not just whether the technology is running.

4. Invest in Upskilling, Not Just Reskilling

There's a difference between teaching someone to use a new tool (reskilling) and teaching them to work in a fundamentally different way (upskilling). AI agent deployment requires upskilling: teaching people to direct agents, evaluate agent outputs, design agent workflows, and make judgment calls that agents can't.

Accenture's 550,000-person reskilling program is a start. But tracking weekly logins is a reskilling metric. The upskilling metric is whether those people can design, direct, and improve AI agent workflows independently.

5. Choose Platforms That Reduce Organizational Friction

The technology you choose determines how much of your capacity is left for organizational change. A platform that requires extensive custom development consumes more time, budget, and attention than one that provides pre-built agent capabilities with enterprise governance included.

The companies reaching production fastest are the ones that chose platforms that minimize the technical burden (the 20%) so they can concentrate resources on the organizational transformation (the 70%).

The Real AI Strategy Is an Operating Model Strategy

The enterprises that will define the next era of AI adoption are not the ones with the most advanced models or the largest AI budgets. They're the ones that redesign how their organizations work.

The 10/20/70 framework makes the priority clear. Spend 10% of your strategic attention on model selection. Spend 20% on data and infrastructure. Spend 70% on organizational design: new roles, new workflows, new metrics, new incentives, new career paths.

Every enterprise leader asking "which AI should we buy?" is asking the wrong question. The question is: "How does our organization need to change to make any AI productive?"

The technology is ready. The organizations, mostly, are not. Closing that gap is the defining challenge of 2026, and the companies that close it first will build compounding advantages that competitors will find very hard to match.