Twelve months ago, the AI industry's answer to every performance ceiling was the same: make the model bigger. More parameters, more training data, more compute. Each generation cost more to train, more to run, and delivered smaller gains on the benchmarks that actually matter for enterprise work.

That trajectory is running into a wall. A peer-reviewed PNAS study published in 2025, which measured how persuasive LLM-written political messages are, found that frontier models are "barely more persuasive than models smaller in size by an order of magnitude or more," and that further scaling "may not significantly increase performance by more than 1 percentage point." OpenAI's GPT-4.5, the company's most aggressively scaled model, delivered qualitative improvements in subjective areas but no substantial gains in verifiable domains like math and science. The vast majority of high-quality public text data has already been consumed by training runs, and additional data is yielding diminishing returns.

The scaling era is not over, but its marginal gains are shrinking. And an alternative is emerging that changes the math entirely.

A 7B model that outperformed GPT-5

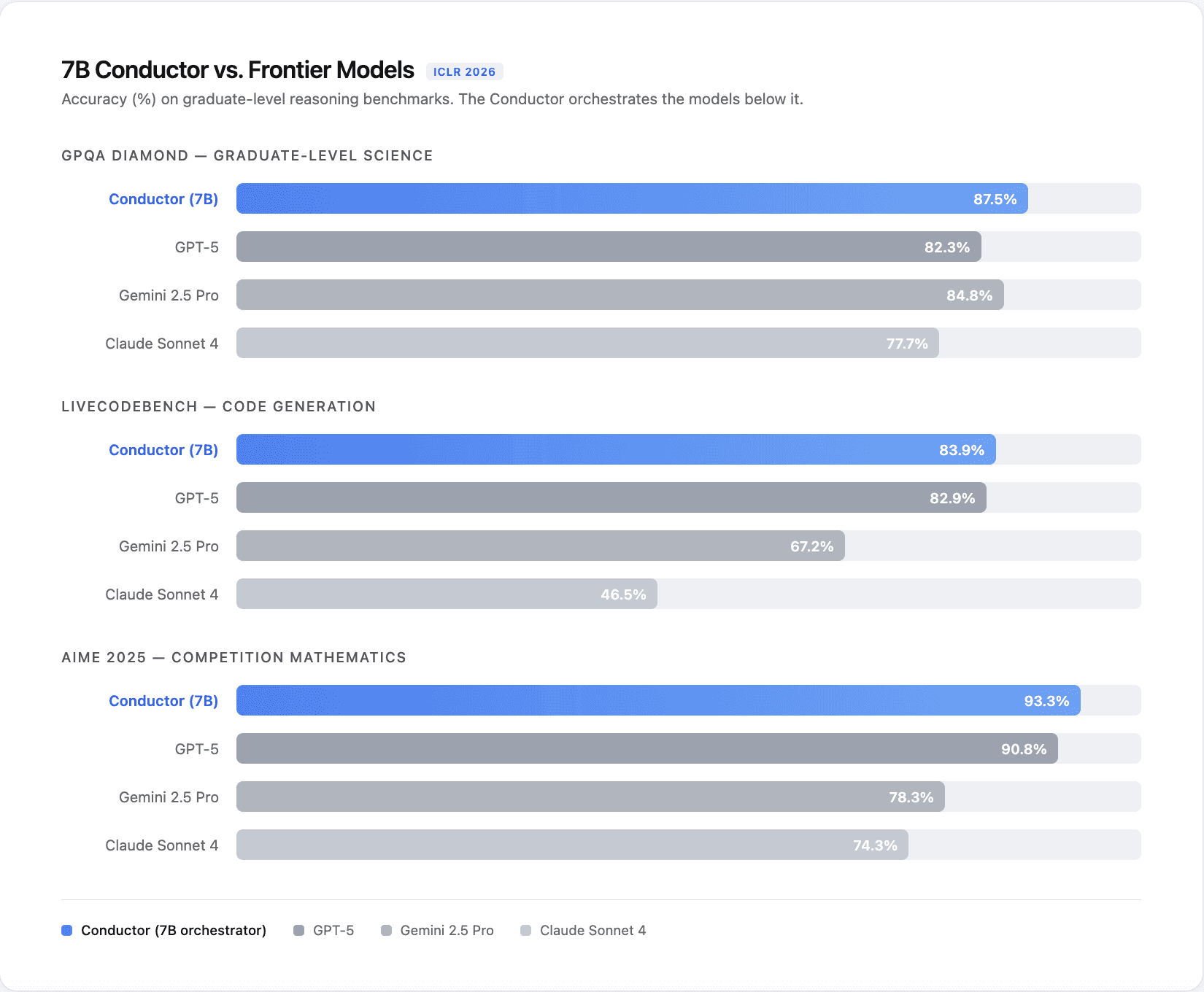

A team at Sakana AI recently published a paper at ICLR 2026 introducing what they call a "Conductor": a 7-billion-parameter model trained with reinforcement learning to coordinate GPT-5, Claude Sonnet 4, Gemini 2.5 Pro, and several open-source models. The Conductor never solves problems itself. It divides them into subtasks, assigns each to the best-suited model, designs the communication pattern between them, and writes focused, prompt-engineered instructions for each worker.
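To make that division of labor concrete, here is a minimal Python sketch of what executing such a plan could look like. It is not Sakana AI's code: the `Step` structure, the worker names, and the stub workers are hypothetical, and the plan below is written by hand, whereas in the paper a 7B model trained with reinforcement learning generates it. Each step names a worker, carries a focused instruction, and lists which earlier outputs it may read, which is one simple way to encode a communication pattern.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A worker maps a prompt to a response. Real workers would wrap model APIs;
# the ones below are stubs so the sketch runs offline.
Worker = Callable[[str], str]


@dataclass
class Step:
    worker: str        # which worker handles this subtask (hypothetical names)
    instruction: str   # focused instruction written by the orchestrator
    sees: List[int] = field(default_factory=list)  # earlier steps whose outputs this step can read


def run_plan(task: str, plan: List[Step], workers: Dict[str, Worker]) -> List[str]:
    """Execute a plan step by step and return every step's output (last = final answer)."""
    outputs: List[str] = []
    for i, step in enumerate(plan):
        if any(not 0 <= j < i for j in step.sees):
            raise ValueError(f"step {i} may only read outputs of earlier steps")
        # Communication pattern: only the listed earlier outputs are shared with this worker.
        context = "\n".join(f"[step {j}] {outputs[j]}" for j in step.sees)
        prompt = f"Task: {task}\nInstruction: {step.instruction}\n{context}".rstrip()
        outputs.append(workers[step.worker](prompt))
    return outputs


# Usage with stub workers and a hand-written two-step plan (solve, then verify).
workers: Dict[str, Worker] = {
    "solver": lambda p: "draft: 42",
    "checker": lambda p: "verified" if "[step 0] draft: 42" in p else "unverified",
}
plan = [
    Step("solver", "Solve the problem step by step."),
    Step("checker", "Check the draft answer for errors.", sees=[0]),
]
outputs = run_plan("What is 6 * 7?", plan, workers)
print(outputs[-1])  # verified
```

Keeping the plan as plain data is what lets a separate model produce it: the orchestrator only has to emit steps, and the executor stays the same regardless of how the plan was chosen.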

The results were striking. The Conductor scored 87.5% on GPQA Diamond where GPT-5 scored 82.3%. It hit 83.93% on LiveCodeBench where GPT-5 reached 82.90%. On AIME 2025, the math competition benchmark, it achieved 93.3% versus GPT-5's 90.8%. Across every benchmark tested, both in-domain and out-of-domain, the orchestrator outperformed every individual model it was managing.

A model that is likely around 200 times smaller than the frontier models it coordinated (their exact parameter counts are not disclosed) produced better answers by combining their complementary strengths rather than trying to know everything itself. That is not an academic curiosity. It is a direct challenge to the assumption that bigger always means better.

This pattern is not isolated. The Mixture-of-Agents paper, accepted at NeurIPS 2024, showed that layering multiple LLMs in collaborative rounds achieved a 65.8% win rate on AlpacaEval 2.0, compared to GPT-4o's 57.5%, an 8-point improvement from coordination alone. Separate research on efficient multi-model orchestration demonstrated a 21.7% accuracy improvement, 33% latency reduction, and 25% cost decrease versus random model allocation. The evidence is converging: coordinating existing models beats scaling any single one.
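The layered collaboration described in the Mixture-of-Agents work can be sketched in a few lines. This is an illustration under assumptions, not the paper's implementation: the synthesis prompt wording and the stub models are invented here. Each layer of proposer models answers the question while seeing the previous layer's responses, and a single aggregator model writes the final answer.

```python
from typing import Callable, List

# A model maps a prompt to a response; stubs stand in for real LLM calls.
Model = Callable[[str], str]


def synthesis_prompt(question: str, responses: List[str]) -> str:
    """Build the prompt asking a model to improve on earlier responses (wording assumed)."""
    refs = "\n".join(f"{k + 1}. {r}" for k, r in enumerate(responses))
    return (
        "Synthesize these responses into one improved answer.\n"
        f"Responses:\n{refs}\n\nQuestion: {question}"
    )


def mixture_of_agents(question: str, layers: List[List[Model]], aggregator: Model) -> str:
    """Run proposer layers in rounds, then let one aggregator write the final answer."""
    previous: List[str] = []
    for layer in layers:
        # The first layer sees only the question; later layers also see the prior round.
        prompt = synthesis_prompt(question, previous) if previous else question
        previous = [model(prompt) for model in layer]
    return aggregator(synthesis_prompt(question, previous))


# Usage: stub proposers tag whether they saw earlier responses.
def proposer(name: str) -> Model:
    return lambda p: f"{name}@{'refs' if 'Responses:' in p else 'raw'}"


layers = [[proposer("a"), proposer("b")], [proposer("a"), proposer("b")]]
final = mixture_of_agents("Q?", layers, aggregator=lambda p: str(p.count("@refs")))
print(final)  # 2: the aggregator saw both second-round responses
```

In a real deployment each stub would be an API call, and the proposers in a layer could come from different providers, which is where the complementary strengths come in.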

Why orchestration creates value where scaling cannot

Different models are good at different things. Claude handles nuanced reasoning and careful analysis. Gemini excels at multimodal tasks and broad knowledge retrieval. GPT-5 leads on certain coding benchmarks. No single model dominates across all categories, and the gap between them is exactly where orchestration creates value.

The Conductor paper revealed something even more interesting about how that value gets created. Through pure reinforcement learning, the model independently discovered coordination strategies that human engineers typically design by hand: task decomposition, specialist assignment, shared reasoning context, verification rounds, and iterative refinement. It learned to allocate more agents and more steps to harder problems while keeping simpler ones lean. It even learned prompt engineering, writing better instructions for GPT-5 than GPT-5 could write for itself.

That last point deserves attention. The model that is best at solving a problem is not necessarily the model best at defining the problem. Those are two separate capabilities, and separating them produces better results. This is the same principle behind how effective teams work: a good manager who knows how to delegate and frame problems well can get more out of a team than any individual contributor working alone, no matter how talented.

Beam's own analysis of the 19-model problem in enterprise AI showed that organizations relying on a single LLM for all tasks overpay by 40-85% compared to those using intelligent routing. Sending a simple lookup query to a frontier model costs roughly 30 times more than routing it to a smaller model that handles it equally well. Multiply that across thousands of daily tasks and the cost difference becomes a line item that finance teams notice.
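The two figures in that paragraph combine into simple arithmetic. The sketch below assumes the 30x price ratio holds per task and treats the share of simple tasks as a free, hypothetical parameter. It also converts an overpayment percentage into the saving a team would see from switching, which is a smaller number: paying 40% more than necessary means routing cuts spend by about 29%, not 40%.

```python
def savings_from_overpay(overpay: float) -> float:
    """If a single-model setup costs (1 + overpay) times a routed one,
    moving to routing cuts spend by 1 - 1 / (1 + overpay)."""
    return 1 - 1 / (1 + overpay)


def blended_cost(simple_share: float, frontier_cost: float, cost_ratio: float = 30.0) -> float:
    """Average cost per task when `simple_share` of tasks go to a model that is
    `cost_ratio` times cheaper, and the rest stay on the frontier model."""
    if not 0.0 <= simple_share <= 1.0:
        raise ValueError("simple_share must be between 0 and 1")
    small_cost = frontier_cost / cost_ratio
    return simple_share * small_cost + (1 - simple_share) * frontier_cost


print(f"{savings_from_overpay(0.40):.1%}")  # 28.6%
print(f"{savings_from_overpay(0.85):.1%}")  # 45.9%
# Hypothetical mix: half of all tasks are simple lookups, frontier cost normalized to 1.0.
print(f"{blended_cost(0.5, 1.0):.3f}")      # 0.517
```

Under that hypothetical 50/50 mix, routing the simple half brings average cost per task to about 52% of the all-frontier baseline, because the cheap half contributes almost nothing once the price gap is 30x.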

Enterprises are already moving this direction

This is not a theoretical shift. Enterprises are voting with their budgets.

Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. That is at least an 8x increase in a single year. Gartner also recorded a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. A LangChain survey of 1,300 practitioners found that 57.3% already have agents in production, and multi-agent architectures grew 327% in under four months.

Those agents will not all run on the same model. They will need orchestration patterns that decide which model handles which step, manage context between agents, and adapt the workflow to the task at hand. JPMorgan Chase runs 450+ AI use cases in production, with its COiN system processing 12,000 credit agreements in seconds by routing different document types to different models. At Build 2025, Microsoft introduced multi-agent orchestration as a first-class platform capability, and HCLTech's deployment of that capability resolved cases 40% faster.

McKinsey estimates that generative AI could add $2.6 to $4.4 trillion in value annually. The bulk of that value does not come from any single model being smarter. It comes from systems that coordinate multiple models, tools, and data sources to complete end-to-end workflows that no individual model can handle alone.

The recursive possibility

The Conductor paper's most forward-looking contribution is recursive orchestration. Allowing the Conductor to call itself as one of its worker agents creates a loop in which the orchestrator can revise its own coordination strategy after seeing initial results. When GPT-5 performed unexpectedly poorly on BigCodeBench, the recursive Conductor automatically shifted its workflow to favor Claude and Gemini in subsequent attempts, improving from 37.8% to 40.0% without any human intervention.
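The Conductor learns this behavior through reinforcement learning. The sketch below swaps in a hand-coded rule purely to show the shape of the loop: an orchestrator that, when a verification check fails, calls itself again with a revised worker preference. The worker names, the `passes` check (standing in for something like a test suite), the preference order, and the depth limit are all hypothetical.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple

Worker = Callable[[str], str]


def orchestrate(
    task: str,
    workers: Dict[str, Worker],
    passes: Callable[[str], bool],
    demoted: FrozenSet[str] = frozenset(),
    depth: int = 0,
    max_depth: int = 2,
) -> Tuple[str, List[str]]:
    """Ask the preferred worker; if its answer fails the check, call this
    orchestrator again with that worker demoted. Returns (answer, workers tried)."""
    # Preference order is dict insertion order (an assumption for this sketch).
    candidates = [name for name in workers if name not in demoted] or list(workers)
    name = candidates[0]
    answer = workers[name](task)
    if passes(answer) or depth >= max_depth:
        return answer, [name]
    # Recursive step: the orchestrator re-plans with a revised worker preference.
    answer, tried = orchestrate(task, workers, passes, demoted | {name}, depth + 1, max_depth)
    return answer, [name] + tried


# Usage: stub workers where the first-choice model fails a hypothetical test suite.
workers: Dict[str, Worker] = {
    "model_a": lambda t: "buggy patch",
    "model_b": lambda t: "passing patch",
    "model_c": lambda t: "passing patch",
}
answer, tried = orchestrate("fix the failing test", workers, passes=lambda a: a.startswith("passing"))
print(answer, tried)  # passing patch ['model_a', 'model_b']
```

The `max_depth` bound matters in any recursive design like this: without it, a task that no worker can pass would recurse indefinitely instead of returning the last attempt.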

This kind of adaptive, self-correcting coordination is what separates a production AI system from a demo. Production environments are messy. Models behave differently on different inputs. A fixed orchestration pipeline breaks when its assumptions fail. A learned, recursive orchestration layer adjusts on the fly.

For teams building agentic workflows today, this points to where infrastructure investment should go. The models will keep improving on their own. Every frontier lab is spending billions to make that happen. But how you combine, route, and coordinate those models is where competitive advantage actually compounds, especially as individual model capabilities continue to converge toward each other.

The real bottleneck was never model size

The AI industry spent the last three years in a parameter race. Bigger models, bigger clusters, bigger budgets. The evidence now suggests that race has a ceiling, and that the next wave of performance gains will come from building better systems around existing models rather than building bigger models from scratch.

A 7B orchestrator that outperforms GPT-5. Multi-agent systems that beat GPT-4o by 8 points on AlpacaEval 2.0. Enterprises avoiding a 40-85% overpayment by routing tasks to the right model instead of the biggest one. The pattern is consistent across research labs, enterprise deployments, and production economics.

The models are commoditizing. The orchestration layer is not. And that is where the future of AI performance, cost efficiency, and enterprise value is being built right now.